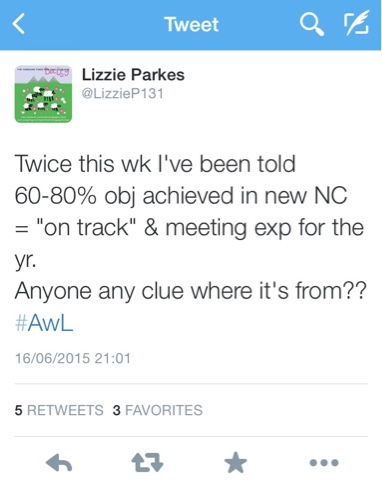

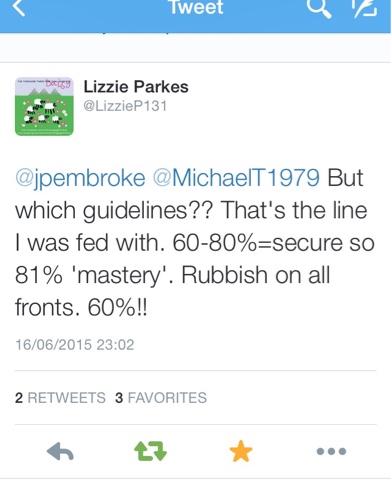

Last night @LizzieP131 tweeted this:

Which was followed by this:

In the past week I’ve been told by headteachers using one particular system that their pupils need to achieve 70% of the objectives to be classified as ‘secure’ whilst another tracking system defines secure as having achieved 67% of the objectives (two thirds). The person who informed us of this was critical of schools choosing to adjust this up to 90% and I’m thinking “hang on! 90% sounds more logical than 67%, surely”.

And then this comes in from @RAS1975:

Really?

Achieving half the objectives makes you secure?

It’s like a race to the bottom.

So, secure can be anything from 51% upwards. And mastery starts at 81%.

I’m sorry, but how the hell can a pupil be deemed secure with huge gaps in their learning? And how can a pupil have achieved ‘mastery’ (whatever that means) when they have achieved only four-fifths of the key objectives for the year?

It makes no sense at all.
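To put rough numbers on those thresholds, here is a minimal sketch in Python. The figure of 30 objectives for the year is purely an assumption for illustration, as is the function name `max_gaps`; the percentages are the ones quoted above. It works out the most objectives a pupil could still be missing while carrying each label.

```python
# Assumption for illustration only: a year's curriculum with 30 key objectives.
TOTAL_OBJECTIVES = 30

# Percentage thresholds quoted in the post (label -> minimum % of objectives).
THRESHOLDS = {
    "secure (just over half)": 51,
    "secure (two thirds)": 67,
    "secure (70%)": 70,
    "mastery (81%)": 81,
    "secure (adjusted to 90%)": 90,
}


def max_gaps(total_objectives: int, threshold_pct: int) -> int:
    """Most objectives a pupil can still be missing and meet the threshold."""
    # Smallest whole number of objectives that reaches the threshold
    # (integer ceiling division, so no floating-point rounding is involved).
    needed = -(-threshold_pct * total_objectives // 100)
    return total_objectives - needed


for label, pct in THRESHOLDS.items():
    gaps = max_gaps(TOTAL_OBJECTIVES, pct)
    print(f"{label}: up to {gaps} of {TOTAL_OBJECTIVES} objectives not achieved")
```

Under that assumed total, a pupil could be labelled ‘secure’ with anywhere from 3 to 14 objectives outstanding, depending on which threshold the system happens to use.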

This is what happens when we insist on shoehorning pupils into best-fit categories based on arbitrary thresholds: it’s meaningless, it doesn’t work and it’s not even necessary.

It’s also potentially detrimental to a pupil’s learning. Just imagine what could happen if we persist in categorising pupils as secure when they still have a third of the year’s objectives left to achieve.

Ensuring that pupils are not moved on with gaps in their learning is central to the ethos of this new curriculum. Depth beats pace; learning must be embedded, broadened and consolidated. How does this ethos fit with systems that award labels of ‘secure’ despite large gaps being present in pupils’ knowledge and skills?

The more I look at current approaches to assessment without levels, the more frustrated and disillusioned I become. System after system is recreating levels and we have to watch them. They may call them steps or bands, but they are levels by another name and they are repeating the mistakes of the past. Pupils are being assigned to a best-fit category that tells us nothing about what they can and cannot do, and they risk being moved on once deemed secure despite learning gaps being present. This was one of the key reasons for getting rid of levels in the first place.

So, take a good look at your system. Look beyond all the bells and whistles, the gizmos and gloss, and ask yourself this: does it really work?

And please, please, please, whatever you do, make sure you….